Python is a programming language with an intuitive syntax and powerful built-in data structures, which make it easy to write clear, compact code. However, one of the drawbacks of Python is that it can be slow compared to other languages. This is largely because the standard implementation, CPython, runs your program through an interpreter instead of compiling it to machine code ahead of time.

Therefore, when running Python code, your computer needs to do more work than it would for a compiled language.

Python is a versatile language that you can use on the backend, frontend, or full stack of a web application. In this post, we will be discussing big O notation and how it applies to Python.

Big O notation is used to describe the time complexity of an algorithm, that is, how its running time grows as the input gets larger.

The time complexity of an algorithm describes how its running time grows as a function of the input size. For example, if a linear-time algorithm takes 10 seconds to run on an input size of 100, it would take roughly 100 seconds to run on an input size of 1000. Time complexity is usually expressed in terms of the number of basic operations the algorithm performs, not seconds, because operation counts do not depend on the machine running the code.

There are three common types of time complexity: constant, linear, and polynomial. Constant time algorithms have a time complexity of O(1), meaning that their running time does not grow with the input size. Linear time algorithms have a time complexity of O(n), meaning that their running time grows in proportion to the input size.

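Polynomial time algorithms have a time complexity of O(n^k) for some constant k. The most common example is quadratic time, O(n^2), where the running time grows with the square of the input size and so increases much faster than in the linear case.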
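As a rough illustration of these three growth rates, the sketch below counts basic steps instead of measuring seconds. The function names and the notion of a "step" are assumptions made for this example; they are not part of any library.

```python
def constant_steps(items):
    """O(1): look at the first element only, whatever the list length."""
    _ = items[0]
    return 1  # one index operation


def linear_steps(items):
    """O(n): touch every element once."""
    steps = 0
    for _ in items:
        steps += 1
    return steps


def quadratic_steps(items):
    """O(n^2): visit every ordered pair of elements."""
    steps = 0
    for _ in items:
        for _ in items:
            steps += 1
    return steps


for n in (10, 100, 1000):
    data = list(range(n))
    print(n, constant_steps(data), linear_steps(data), quadratic_steps(data))
```

Each time n grows by a factor of 10, the constant count stays at 1, the linear count grows by 10, and the quadratic count grows by 100.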

In general, you want your algorithms to have a low time complexity so that they will run quickly regardless of the input size. However, there are tradeoffs involved in choosing which type of algorithm to use for each problem.

For example, a simple linear or quadratic algorithm is often easier to write, but on large inputs it will take longer to run than a more carefully designed algorithm with a lower time complexity.

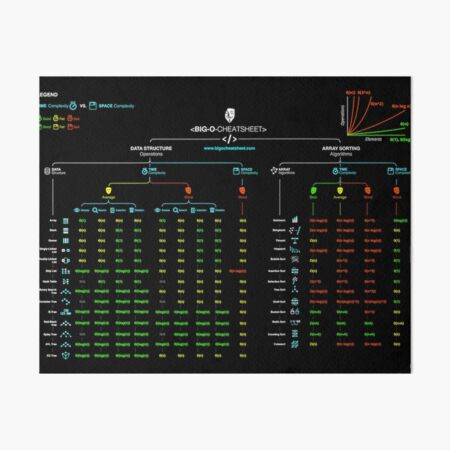

Big O Notation Calculator

If you’re a programmer, chances are you’ve heard of Big O Notation. It’s a way of describing the complexity of an algorithm, and it’s something that every programmer should understand.

There are a lot of resources out there that explain Big O Notation, but one that I particularly like is this Big O Notation Calculator.

It allows you to input an algorithm and see its complexity represented in Big O Notation.

This is a great tool for understanding how different algorithms scale. For example, let’s say you have two sorting algorithms, one that sorts in O(n) time and one that sorts in O(nlogn) time.

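For sufficiently large inputs the O(n) algorithm will be faster, because it has the lower asymptotic complexity. The O(n log n) algorithm can still win on small inputs if its constant factors are smaller.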
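To see how an O(n) and an O(n log n) algorithm can compare at different input sizes, here is a small sketch with two made-up cost models. The constant factors (10 and 1) are assumptions chosen only to make the crossover visible; real algorithms will have different constants.

```python
import math


def linear_cost(n):
    # Hypothetical O(n) algorithm with a large constant factor of 10.
    return 10 * n


def nlogn_cost(n):
    # Hypothetical O(n log n) algorithm with a constant factor of 1.
    return n * math.log2(n)


for n in (100, 1_000, 10_000, 1_000_000):
    winner = "O(n)" if linear_cost(n) < nlogn_cost(n) else "O(n log n)"
    print(f"n={n:>9}: cheaper model is {winner}")
```

With these particular constants the two models cross where log2(n) = 10, that is, around n = 1024. Below that the O(n log n) model is cheaper; above it the O(n) model is cheaper and stays cheaper.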

The Big O Notation Calculator is a great tool for visualizing these differences and understanding how they work. Give it a try next time you’re trying to wrap your head around this important concept!

Credit: www.redbubble.com

How Do You Use Big-O Notation in Python?

Big-O notation is a mathematical way of representing the complexity of an algorithm. It allows us to compare different algorithms in terms of how quickly they run. The big-O notation for an algorithm is represented by a function that describes how the running time of the algorithm grows as the input size gets larger.

For example, if we have an algorithm that takes 2 seconds to run when the input size is 10, and 200 seconds to run when the input size is 100, that growth is consistent with O(n^2). This means that as the input size gets larger, the running time grows at a rate of n^2: making the input 10 times larger makes the running time about 10^2 = 100 times longer. Two measurements only suggest a growth rate, though; the actual bound comes from analyzing the algorithm itself.
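One rough way to guess a growth rate from measurements is to assume the running time behaves like c * n^k and solve for k from two data points. The helper below is a sketch under that assumption; the function name and the timings are hypothetical, and real measurements are noisy enough that the result is only a hint.

```python
import math


def estimate_exponent(n1, t1, n2, t2):
    """Estimate k in t ~ c * n**k from two (size, time) measurements."""
    return math.log(t2 / t1) / math.log(n2 / n1)


# Hypothetical measurements: 2 s at n = 10 and 200 s at n = 100.
k = estimate_exponent(10, 2.0, 100, 200.0)
print(round(k, 3))  # prints 2.0, which suggests quadratic growth
```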

Big-O is not the only asymptotic notation used to describe algorithms. One of the most common relatives is called “big-theta” or simply “theta”.

Big-theta gives us a tight bound on the running time of an algorithm; that is, it bounds the running time from above and from below by the same function, up to constant factors. For example, if for all sufficiently large n the running time is always at least c1·n^3 and at most c2·n^3 for some positive constants c1 and c2, then we can say its big-theta notation is Θ(n^3). In other words, as our inputs get large, the running time grows neither faster nor slower than n^3.

Big-O and big-theta notation both give us valuable information about algorithms, but they say different things. Big-O gives an upper bound: the running time grows no faster than the stated function, which is usually enough to compare how two algorithms scale as inputs get larger. Big-theta is a stronger statement: the running time grows at exactly that rate, up to constant factors, so it is the notation to use when you want a precise characterization of a single algorithm.

What Is O(n!) Time Complexity?

In computer science, the time complexity of an algorithm describes how the time it takes to run depends on the size of the input. Time complexity is typically expressed as a function of the input size, denoted by T(n). For example, if an algorithm takes 30 seconds to sort a list of 100 items, then T(100) = 30 seconds. That is a single value of T; the time complexity describes how T(n) behaves as n grows.

There are two main types of time complexity: worst-case and average-case. Worst-case time complexity is the amount of time it takes for an algorithm to run in the worst possible scenario. Average-case time complexity is the amount of time it takes for an algorithm to run on average.
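The difference between worst case and average case shows up even in a plain linear search. The sketch below counts comparisons; the function name is made up for this example, and the average assumes the target is equally likely to be at any position in the list.

```python
def comparisons_to_find(items, target):
    """Return how many comparisons a linear search makes before it stops."""
    count = 0
    for item in items:
        count += 1
        if item == target:
            break
    return count


n = 100
data = list(range(n))

# Worst case: the target is the last element (or missing), so every item is checked.
worst = comparisons_to_find(data, n - 1)

# Average case, assuming each position is equally likely to hold the target.
average = sum(comparisons_to_find(data, t) for t in data) / n

print(worst, average)  # prints 100 50.5
```

Both cases are O(n) here: the average is about half the worst case, and a constant factor of one half does not change the growth rate.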

There are also two other important types of time complexity: amortized and expected. Amortized time complexity is the average cost per operation over a worst-case sequence of operations, so that an occasional expensive operation is spread across the many cheap ones around it. Expected time complexity is the average running time of a randomized algorithm, where the average is taken over the algorithm's own random choices.
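A classic example of amortized cost is appending to a growable array. The sketch below simulates a simplified array that doubles its capacity whenever it is full and counts how many element copies the resizes cause. This doubling model is an assumption for illustration; CPython's real list uses a different growth pattern, although the amortized idea is the same.

```python
def copies_for_appends(n):
    """Count element copies made by a simplified doubling array over n appends."""
    capacity = 1
    size = 0
    copies = 0
    for _ in range(n):
        if size == capacity:
            copies += size  # move every existing element to a bigger block
            capacity *= 2
        size += 1
    return copies


for n in (10, 1_000, 100_000):
    total = copies_for_appends(n)
    print(n, total, total / n)
```

A single append that triggers a resize costs O(n), but the total number of copies stays below 2n, so the amortized cost per append is O(1).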

The most common way to express time complexity is using Big O notation. Big O notation is a mathematical way of expressing how fast an algorithm's running time grows with respect to its input size. For example, if an algorithm has a runtime of O(n), that means its runtime will grow at most linearly with respect to its input size n.

If you double the input size, then you can expect the algorithm’s runtime to take approximately twice as long!
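In Python, one way to check the doubling behavior empirically is with the standard timeit module. The sketch below times a simple linear loop at sizes n and 2n and reports the ratio. The function names are made up for this example, and the result depends on your machine, so treat it as a rough experiment and not a proof.

```python
import timeit


def linear_work(n):
    """Do one addition per element, so the work is proportional to n."""
    total = 0
    for i in range(n):
        total += i
    return total


def doubling_ratio(n, repeats=5):
    """Time linear_work at n and 2n and return (time at 2n) / (time at n)."""
    t_n = min(timeit.repeat(lambda: linear_work(n), number=1, repeat=repeats))
    t_2n = min(timeit.repeat(lambda: linear_work(2 * n), number=1, repeat=repeats))
    return t_2n / t_n


print(doubling_ratio(200_000))
```

For a linear algorithm the ratio is usually close to 2, but timing noise means it will rarely be exactly 2. Taking the minimum over several repeats reduces the effect of other processes on the machine.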

Is Big-O Notation Difficult?

No, Big-O notation is not difficult. In fact, it’s relatively easy to understand once you get the hang of it. The main thing to remember is that Big-O notation tells you how fast an algorithm’s running time grows as the input size grows.

There are different types of growth rates that you might see when using Big-O notation. The most common ones are linear, quadratic, and cubic. Linear growth means that the algorithm’s running time will grow at the same rate as the input size.

Quadratic growth means that the algorithm’s running time will grow at a rate proportional to the square of the input size. Cubic growth means that the algorithm’s running time will grow at a rate proportional to the cube of the input size.

Big-O notation is a way of formalizing these growth rates so that they can be compared more easily.

When we say that an algorithm has a time complexity of O(n), we’re saying that its running time will be less than or equal to some constant times n (where n is the input size). So, if we have an algorithm with a time complexity of O(n), we know that its running time will never be more than some constant number times n, no matter how large n gets.

Similarly, if we have an algorithm with a time complexity of O(n^2), we know that its running time will never be more than some constant number times n^2, no matter how large n gets.

In general, we can say that the running time of an algorithm with a time complexity of O(f(n)) will never be more than some constant number times f(n) once n is large enough, no matter how large n gets.

How Do You Prove Big-O Notation Examples?

There are a few ways to prove Big-O notation examples. The most common way is to work directly from the formal definition of Big-O. This states that f(n) is O(g(n)) if and only if there exists a real number M > 0 and an integer N such that |f(n)| ≤ M|g(n)| for all n ≥ N. To prove a bound, you find specific values of M and N and show that the inequality holds.
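As a worked example, take f(n) = 3n + 5 and g(n) = n. Whenever n ≥ 5 we have 5 ≤ n, so 3n + 5 ≤ 3n + n = 4n. That gives M = 4 and N = 5, which proves that 3n + 5 is O(n). The sketch below spot-checks those constants numerically; the helper name is hypothetical, and a finite check like this is only a sanity test, not a substitute for the algebra.

```python
def holds_on_range(f, g, M, N, upper):
    """Check |f(n)| <= M * |g(n)| for every integer n with N <= n <= upper."""
    return all(abs(f(n)) <= M * abs(g(n)) for n in range(N, upper + 1))


def f(n):
    return 3 * n + 5


def g(n):
    return n


print(holds_on_range(f, g, M=4, N=5, upper=10_000))  # True
print(holds_on_range(f, g, M=4, N=1, upper=10_000))  # False: fails for n = 1 to 4
```

The second call shows why the definition only requires the inequality from some N onward: the bound fails for the first few values of n and holds for every n after that.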

Big O Cheat Sheet

Conclusion

In mathematics and computer science, the Big O notation is a mathematical notation that describes the limiting behavior of a function when the argument tends towards a particular value or infinity. It is usually written as a bound: for example, “f(n) = O(g(n))” means that there exists some positive constant c such that f(n) ≤ c·g(n) for all sufficiently large values of n. The set of all functions f that satisfy this condition is called the Big O of g(n).
